How to Run a GPU Job

Follow these steps to run a GPU job on the Grid.

1. Log on to the Grid.

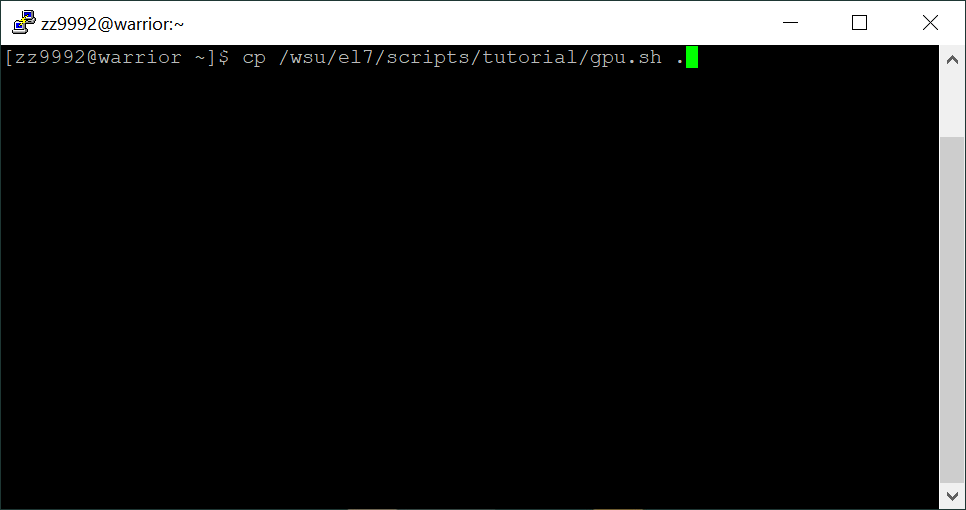

2. From your home directory, copy the sample GPU job script into it by typing: `cp /wsu/el7/scripts/tutorial/gpu.sh .`

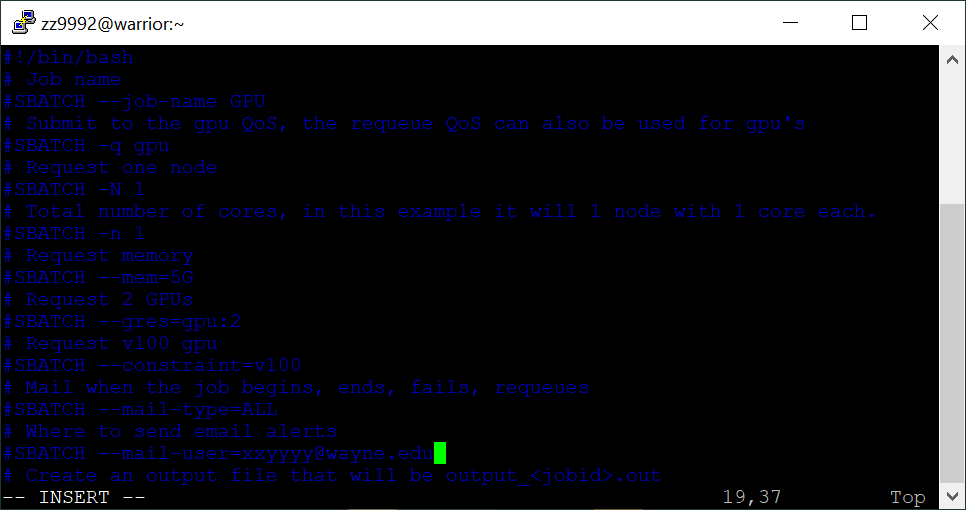

This is a sample script that you can edit to suit your needs. Each directive is preceded by a comment that describes it.

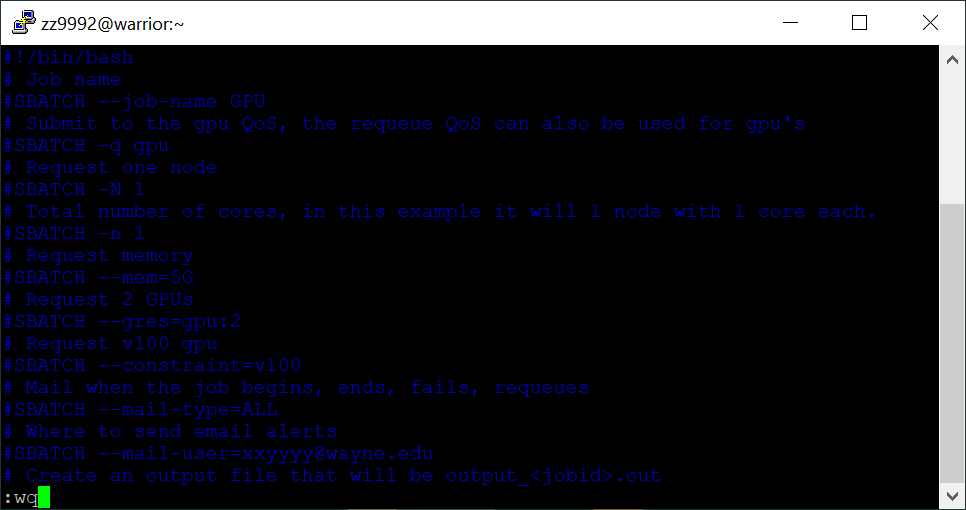

The script contains the following:

```bash
#!/bin/bash
# Job name
#SBATCH --job-name GPU
# Submit to the gpu QoS; the requeue QoS can also be used for GPUs
#SBATCH -q gpu
# Request one node
#SBATCH -N 1
# Number of tasks (cores); in this example, 1 core on 1 node
#SBATCH -n 1
# Request memory
#SBATCH --mem=5G
# Request 2 GPUs
#SBATCH --gres=gpu:2
# Request V100 GPUs
#SBATCH --constraint=v100
# Send mail when the job begins, ends, fails, or is requeued
#SBATCH --mail-type=ALL
# Where to send email alerts
#SBATCH --mail-user=xxyyyy@wayne.edu
# Create an output file named output_<jobid>.out
#SBATCH -o output_%j.out
# Create an error file named errors_<jobid>.err
#SBATCH -e errors_%j.err
# Set the maximum run time (days-hours:minutes:seconds; here, 1 day)
#SBATCH -t 1-0:0:0
# List the assigned GPUs
echo "Assigned GPU: $CUDA_VISIBLE_DEVICES"
# Check the state of the GPUs
nvidia-smi
```

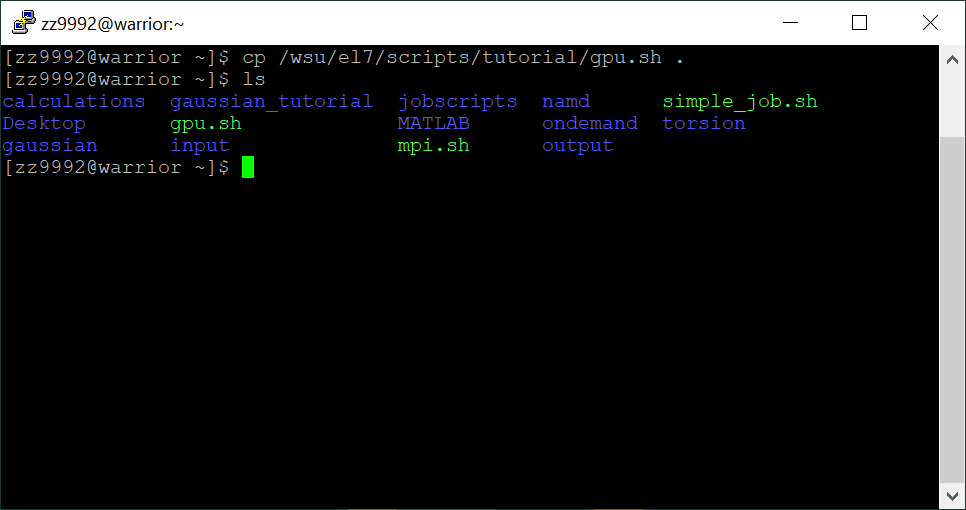

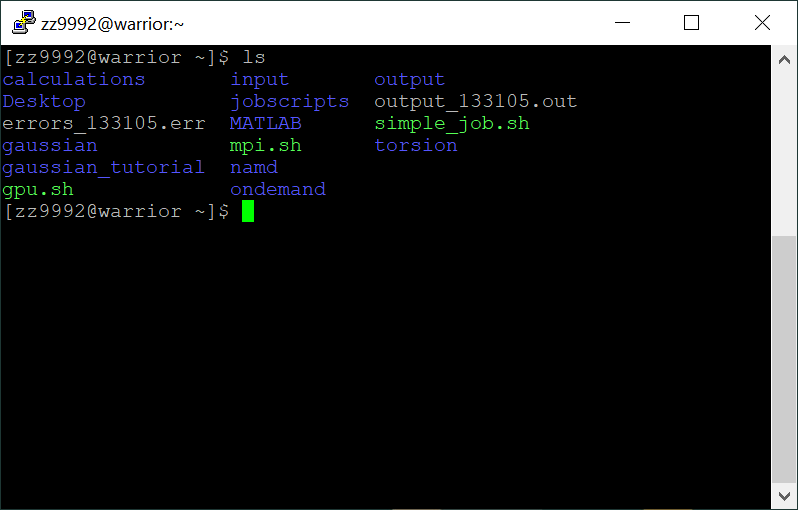

3. Check that the script is in your home directory by typing: `ls`

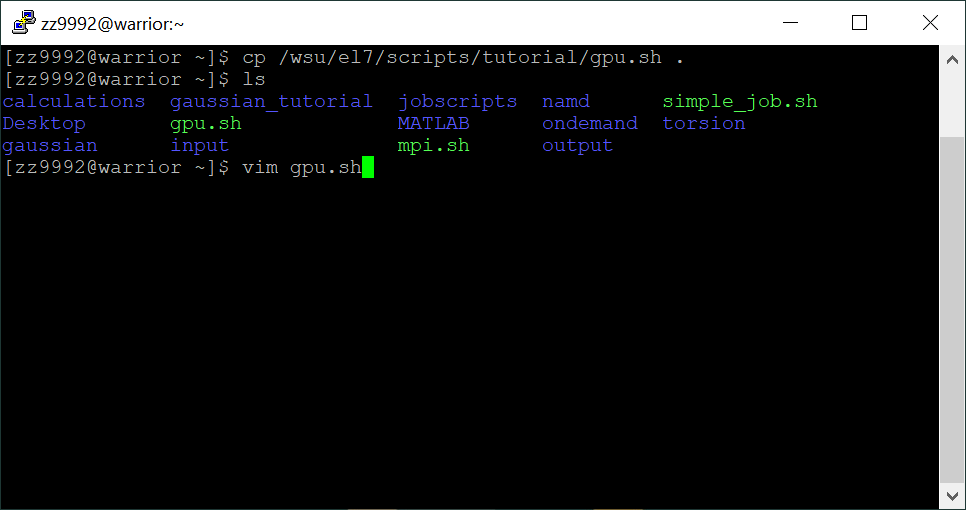
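Besides `ls`, you can confirm the copy and review what the job will request by printing only its `#SBATCH` lines. The helper below is a minimal sketch; the function name `show_directives` is hypothetical and is not a Grid command.

```bash
#!/bin/bash
# Hypothetical helper (not a Grid command): print the #SBATCH directive
# lines of a job script, prefixed with their line numbers.
show_directives() {
    local script="${1:-gpu.sh}"   # defaults to gpu.sh in the current directory
    grep -n '^#SBATCH' "$script"
}
```

For example, `show_directives gpu.sh` prints each directive with its line number. Running `grep -n '^#SBATCH' gpu.sh` directly does the same thing without defining a function.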

4. Open the script in the vim text editor by typing: `vim gpu.sh`

Press `i` to enter insert mode, and use the arrow keys to move through the script. Be sure to change the email address to your own.

When you are done, press `Esc` to leave insert mode, then type `:wq` and press Enter to save and quit.

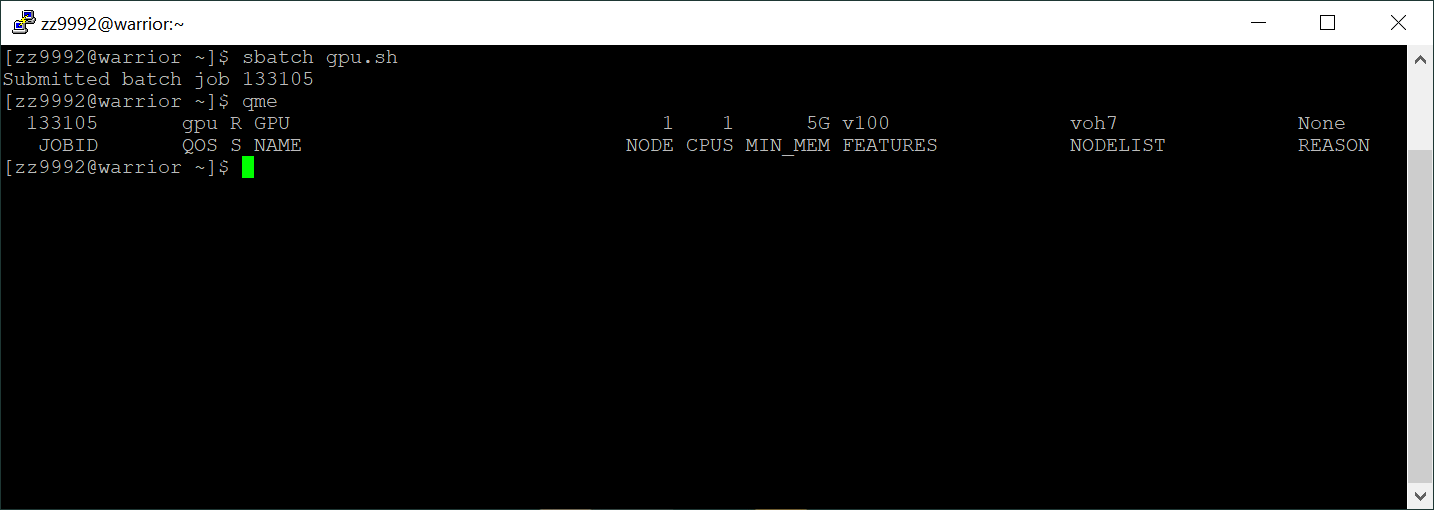
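If you prefer not to edit interactively, the email address can also be changed from the command line with `sed`. This is a minimal sketch that assumes GNU `sed` (for in-place editing with `-i`, as Linux systems normally provide) and an address without `|` or `&` characters; `set_mail_user` and the example address are hypothetical.

```bash
#!/bin/bash
# Hypothetical helper: rewrite the --mail-user directive in a job script.
# Assumes GNU sed (-i edits the file in place) and an address containing
# no '|' or '&' characters, which sed would treat specially here.
set_mail_user() {
    local email="$1"
    local script="${2:-gpu.sh}"
    sed -i "s|^#SBATCH --mail-user=.*|#SBATCH --mail-user=${email}|" "$script"
}
```

For example: `set_mail_user your_address@wayne.edu gpu.sh`, where `your_address@wayne.edu` is a placeholder for your own address.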

5. Submit the job script by typing: `sbatch gpu.sh`. Check that your job is running by typing: `qme`

The output of `qme` shows the job ID, the QOS the job is running under, the job state, the job name, the number of nodes, the number of CPUs, the memory, the features (requested with `--constraint`) that the job was allocated, the node list, and, if the job is not running, the reason why.
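`qme` is specific to the Grid. If you want to capture the job ID at submission time and query the job with standard Slurm commands instead, the following is a minimal sketch. It assumes `sbatch --parsable` (which prints just the job ID) and an `squeue` output format chosen to roughly match the columns `qme` reports; it is not how `qme` itself is implemented, and `submit_and_check` is a hypothetical name.

```bash
#!/bin/bash
# Hypothetical helper: submit a job script and show its status with squeue.
# The squeue columns only approximate qme's output; they are an assumption.
submit_and_check() {
    local script="${1:-gpu.sh}"
    local jobid
    # --parsable makes sbatch print only the job ID (optionally followed by
    # ";cluster") instead of "Submitted batch job <id>".
    jobid=$(sbatch --parsable "$script") || return 1
    jobid=${jobid%%;*}   # drop any ";cluster" suffix
    echo "Submitted job $jobid"
    # Columns: job ID, QOS, state, name, nodes, CPUs, requested memory,
    # requested features, node list, and the reason for the current state.
    squeue -j "$jobid" -o "%.10i %.8q %.10T %.10j %.5D %.5C %.8m %.10f %N %r"
}
```

For example, `submit_and_check gpu.sh`. The job ID it prints is the same `<jobid>` that appears in the output and error file names.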

6. When your job has finished, you should have an output file and an error file (output_$JOBID.out and errors_$JOBID.err) in your home directory. Check by typing: `ls`

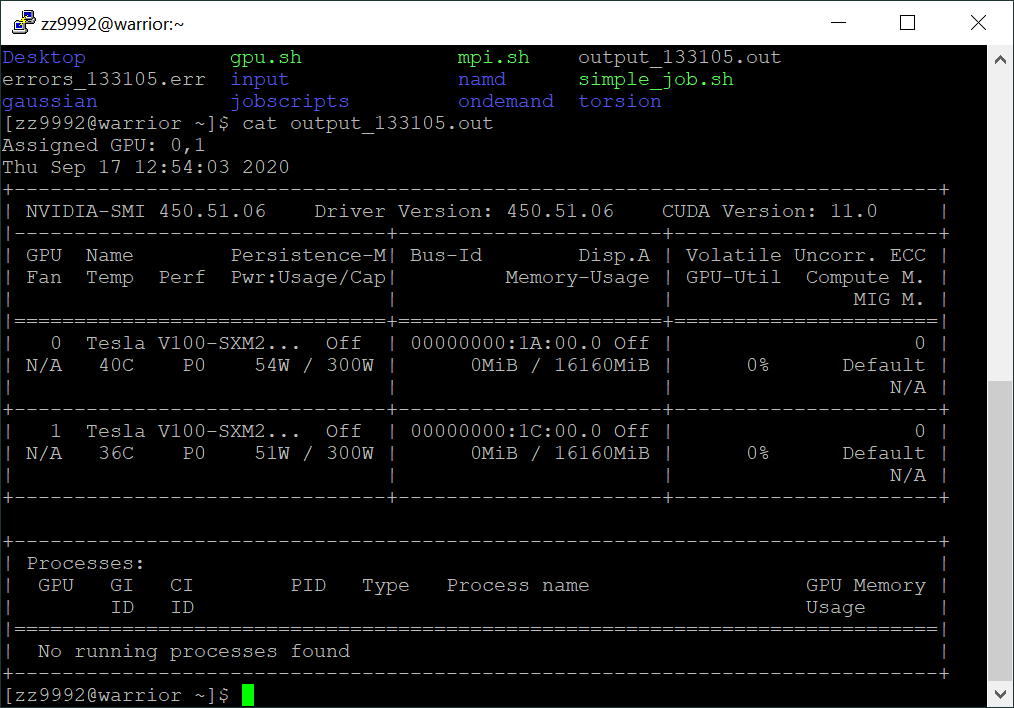

Check the contents of the output file with `cat`. In this example the job ID is 133105, so the command is: `cat output_133105.out`

The output from the script shows the GPUs that were assigned and their state as reported by `nvidia-smi`.
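To check both files at once, a small helper can print each one for a given job ID. This is a sketch; `show_job_files` is a hypothetical name, and the file names assume the `-o output_%j.out` and `-e errors_%j.err` directives from gpu.sh, so adjust them if you changed those lines. An empty error file means the job wrote nothing to standard error.

```bash
#!/bin/bash
# Hypothetical helper: print the output and error files for one job ID.
# File names assume the "-o output_%j.out" and "-e errors_%j.err" directives.
show_job_files() {
    local jobid="$1"
    local f
    for f in "output_${jobid}.out" "errors_${jobid}.err"; do
        if [ -f "$f" ]; then
            echo "== $f =="
            cat "$f"
        else
            echo "== $f (not found) =="
        fi
    done
}
```

For example, `show_job_files 133105`, run from the directory that contains the files.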